Data & AI

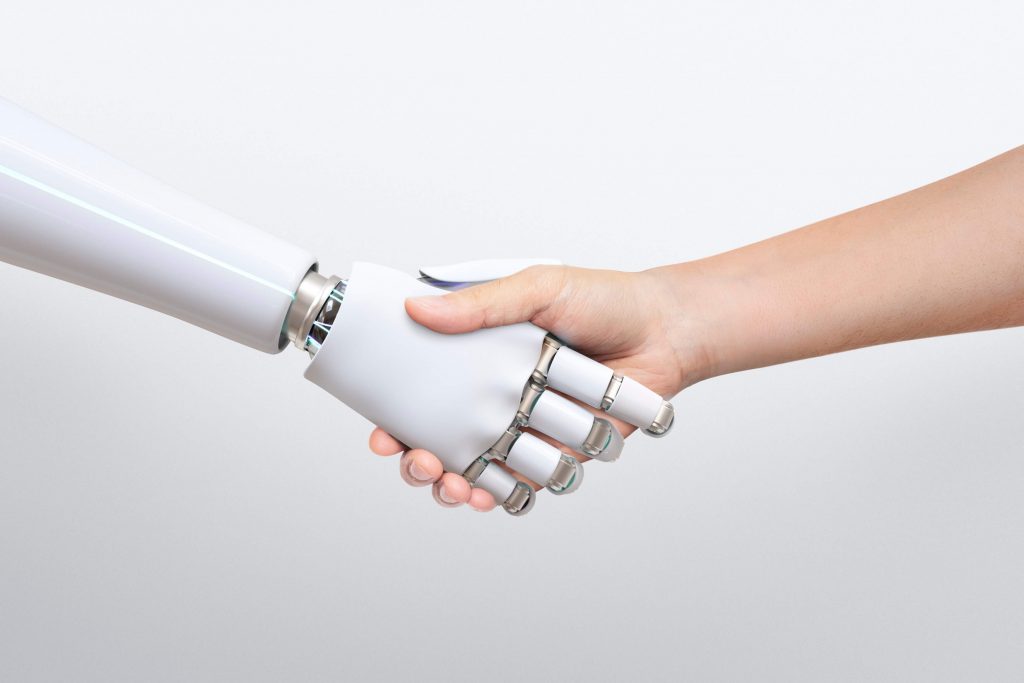

About Data & AI

The Data & AI team brings together the skills and technologies needed to help your organization make the most of data and artificial intelligence. We combine the areas of Business Intelligence & Analytics and Artificial Intelligence to create tailored solutions that directly address your needs.

By combining accurate data, intelligent algorithms, and a data-driven approach, we help you understand and act on information more efficiently. Our goal is to deliver a digital transformation that makes your organization more agile, intelligent, and prepared for the future.

Business Intelligence & Analytics

The Business Intelligence & Analytics area helps your organization extract value from data, using technologies such as Big Data, real-time analytics, Master Data Management, data visualization, and Data Governance. Through an integrated approach, we handle data to ensure accuracy, security, and scalability.

We develop tailored solutions, helping your company make more informed decisions aligned with your business objectives. We are here to transform the way you work with data, making you more efficient and focused on concrete results.

Artificial Intelligence

Artificial Intelligence (AI) is the branch of computer science concerned with giving machines the capabilities associated with human intelligence. It enables the creation of systems that learn from data and experience, allowing them to reason, understand, interpret, predict, plan, and optimize.

Less than two decades ago, the use of AI in the business world was still at an "early adoption" stage, with its potential largely theoretical. Since then, AI technologies have evolved rapidly, enabling countless innovative applications, amplifying existing ones, and generating enormous business value.

Drivers for change

Why Data & AI?

Reduced costs

Automating repetitive processes to increase efficiency and quality.

Maximized revenue

Adding innovative features to your products and services to attract new customers and increase customer satisfaction.

Speed of execution

Minimizing the response time to unexpected factors, in order to overcome obstacles faster and achieve the expected operational results.

Minimized complexity

Improving insights and decision-making through more proactive predictive analytics, capable of detecting patterns in highly complex data.

Increased productivity

Fostering a more agile interaction between humans and machines, which translates into faster execution of operations or more informed decision-making.

Transformed involvement

Transforming how your customers interact with your services by offering more transparent and personalized experiences to each one.

Anticipating competition

Discovering innovative new products and services, and redefining and enhancing business models to gain a more significant market share.

Reinforced confidence

Elevating customer confidence in your brand by improving the quality and consistency of your products, while protecting against security risks and fraud.

Technologies

Extract, Transform and Load

Extract, Transform and Load (ETL) refers to a process that extracts different types of data from different sources, transforms and standardizes them, and then loads them into a final repository. It consolidates an organization's data in a single location in order to provide quick and easy access to data while ensuring data quality, serve as the basis for reports and dashboards that support decision-making, and enable migration to more modern and faster systems.
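As a rough illustration of the three ETL steps, the sketch below uses only the Python standard library. The two sources, their field names, and the `sales` table are hypothetical and chosen only for the example; a real pipeline would read from actual files, databases, or services.

```python
import csv
import io
import sqlite3

# Hypothetical source 1: a CSV export with amounts in euros.
CSV_SOURCE = "customer,amount_eur\nAlice,10.50\n Bob ,3.00\n"

# Hypothetical source 2: records from another system, amounts in cents.
API_SOURCE = [{"name": "Carol", "amount_cents": 725}]


def extract():
    """Extract: read raw rows from each source without changing them."""
    csv_rows = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    return csv_rows, API_SOURCE


def transform(csv_rows, api_rows):
    """Transform: standardize both sources to (customer, amount_cents)."""
    unified = []
    for row in csv_rows:
        name = row["customer"].strip().lower()
        unified.append((name, round(float(row["amount_eur"]) * 100)))
    for row in api_rows:
        unified.append((row["name"].strip().lower(), int(row["amount_cents"])))
    return unified


def load(rows, conn):
    """Load: write the standardized rows into the final repository."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (customer TEXT, amount_cents INTEGER)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    conn.commit()


# An in-memory SQLite database stands in for the final repository.
conn = sqlite3.connect(":memory:")
csv_rows, api_rows = extract()
load(transform(csv_rows, api_rows), conn)

total = conn.execute("SELECT SUM(amount_cents) FROM sales").fetchone()[0]
print(total)  # 2075
```

Keeping the three steps as separate functions means a new source only needs its own extraction and standardization logic, while the repository and everything built on it stay unchanged.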

Visualization

Data visualization is the visual presentation of information and one of the crucial tools for quick and accurate interpretation of the insights gained through advanced analytics. It helps extract maximum value from these analyses, so that the most appropriate actions can be taken in highly competitive markets.

Big Data

Big Data is a term used to characterize data sets of high Volume, Velocity and Variety. Because of these characteristics, known as the 3 V's of Big Data, interacting with this data is complex, and specialized tools are needed to extract value from its analysis. This value can then be disseminated throughout the organization, with advantages such as optimizing corporate decision-making and internal processes, driving more effective marketing strategies, identifying market trends, promoting better relationships with customers, and supporting risk management.

Analysis

Data analysis is the process of transforming data, often uninterpretable to humans, into useful insights for the various purposes of an organization. It allows an organization to understand the results of its past actions and initiatives, with the objective of optimizing what is being done in the present, to obtain the best results in the future.

Master Data Management

Master Data Management (MDM) consists of a set of practices and technologies that ensure the consistency and accuracy of an organization's essential master data, creating a "single source of truth" for all systems and departments. The goal is to ensure high-quality data for more informed decisions and more efficient operations.
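One common way to picture a "single source of truth" is the merging of duplicate records from several systems into one consolidated record per entity. The sketch below assumes two hypothetical systems (`crm` and `billing`), matches records on a normalized email address, and lets the most recently updated non-empty value win; the field names, sample values, and both rules are illustrative assumptions, not a description of any particular MDM product.

```python
from collections import defaultdict

# Hypothetical customer records held by two separate systems.
crm = [
    {"email": "Ana@Example.com ", "name": "Ana Silva", "phone": "",
     "updated": "2024-01-10"},
]
billing = [
    {"email": "ana@example.com", "name": "A. Silva", "phone": "555-0100",
     "updated": "2024-03-02"},
    {"email": "rui@example.com", "name": "Rui Costa", "phone": "",
     "updated": "2024-02-01"},
]


def match_key(record):
    """Assumed matching rule: same email, ignoring case and whitespace."""
    return record["email"].strip().lower()


def build_golden_records(*sources):
    """Group matching records and merge each group into one record."""
    groups = defaultdict(list)
    for source in sources:
        for record in source:
            groups[match_key(record)].append(record)

    golden = {}
    for key, records in groups.items():
        # Oldest first, so newer non-empty values overwrite older ones.
        ordered = sorted(records, key=lambda r: r["updated"])
        merged = {"email": key, "name": "", "phone": ""}
        for record in ordered:
            for field in ("name", "phone"):
                if record[field]:
                    merged[field] = record[field]
        golden[key] = merged
    return golden


golden = build_golden_records(crm, billing)
print(golden["ana@example.com"])
```

In practice, matching and survivorship rules are usually far richer (fuzzy matching, source priorities, manual review), but the principle of resolving many records into one trusted record is the same.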

Machine Learning

Machine Learning (ML) is the process by which a machine autonomously learns to perform one or more specific tasks, given only historical data and the goal it is trying to achieve, in order to optimize a performance metric. This is made possible by algorithms that automate the construction of knowledge representation models in order to make predictions based on historical data, optimize a process under uncertainty, extract hidden insights from massive data sets, and classify information according to well-defined objectives.
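To make "learning from historical data to optimize a performance metric" concrete, here is a minimal sketch in plain Python. It fits a straight line to a small, made-up data set by gradient descent, using mean squared error as the performance metric. The data, the learning rate, and the number of iterations are assumptions chosen for illustration; real projects would normally rely on an established ML library.

```python
# Hypothetical historical data: (input, observed outcome) pairs.
# They were generated from y = 2x + 1, which the model does not know.
history = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0), (4.0, 9.0)]


def mse(w, b, data):
    """Performance metric: mean squared error of the line y = w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def fit(data, lr=0.05, epochs=2000):
    """Learn w and b by repeatedly stepping against the MSE gradient."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


w, b = fit(history)
print("error before training:", mse(0.0, 0.0, history))
print("error after training: ", mse(w, b, history))
print("prediction for x = 5: ", w * 5 + b)
```

The program is never told the rule behind the data. It only sees the examples and the metric, and the learned parameters should end up close to w = 2 and b = 1.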

Deep Learning

Deep Learning (DL) is a branch of Machine Learning (ML) that builds complex representations and interactions from huge amounts of data, using non-linear models such as neural networks. The last decade has seen an explosion in the popularity of this technology, which has given rise to numerous innovative applications that were previously not possible. This is due in large part to the exponential increase in the amount of data and computing power available, which has amplified machines' ability to predict, classify, interpret, and prescribe; in short, to understand information, and therefore the real world.

Computer Vision

Computer Vision (CV) is the branch of artificial intelligence that studies the processing of images by a machine. It underpins the construction of artificial systems capable of interpreting, analyzing and understanding information from images, video and other multidimensional data. With this technology it is possible to develop countless applications, ranging from facial, emotion or license plate recognition to the autonomous inspection of assembly lines or power distribution towers. It is also one of the main catalysts of autonomous driving.

Explainable AI

Explainable Artificial Intelligence (xAI) is the field of research dedicated to methods that allow Artificial Intelligence applications to produce results that humans can understand and interpret. Implementing solutions with these methods is one of the key requirements for the responsible use of AI in real organizations, with fairness, interpretability, and accountability.

Natural Language Processing

Natural Language Processing (NLP) is the branch of artificial intelligence responsible for giving machines the ability to understand, interpret, and manipulate human language. It bridges the gap between human communication and computer understanding, enabling complex human-machine dialogues, the extraction of context and insight from large amounts of unstructured data, and even the simulation of specific human voices.

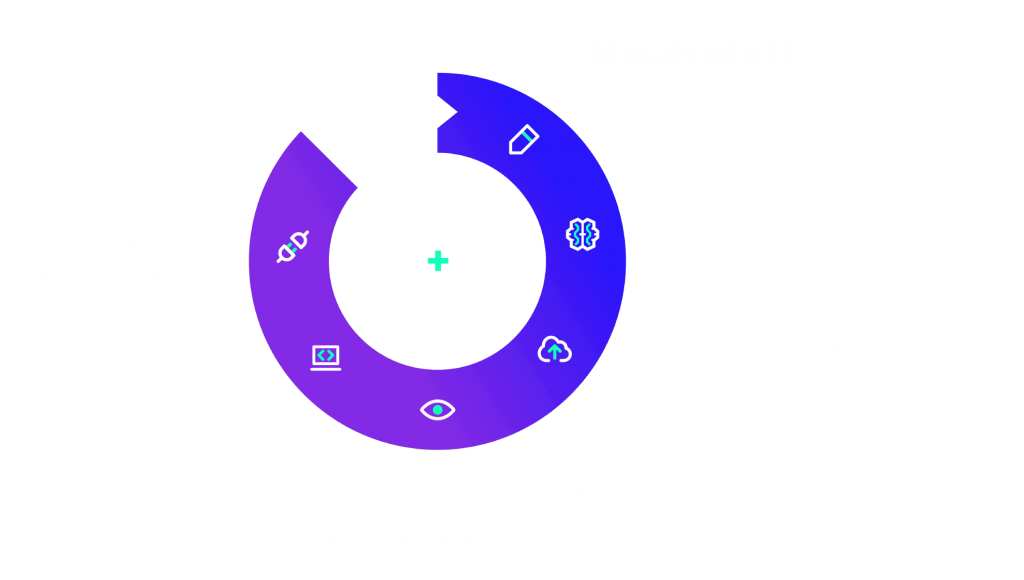

Project Stages

Data & AI Ideology

The Data & AI team is ready to implement your innovative solution, while supporting you in developing your data culture and transformation strategy for a more intelligent enterprise, through:

- Well-defined processes to ensure the management and quality of your data;

- Rapid integration of existing data and methods;

- Incorporation of practices at the core of your business operations that protect the security of your data;

- Systematic updating of the technology stack, based on clearly defined criteria, to take advantage of the latest innovations;

- Techniques to ensure solutions are interpretable and scalable;

- Consultation with key users during all phases of design, development and implementation;

- Training programs to develop the skills of technical and non-technical staff;